Abstract

Recent multimodal generation models have achieved remarkable progress on general-purpose generation tasks, yet continue to struggle with complex instructions and specialized downstream tasks. Inspired by the success of advanced agent frameworks such as Claude Code, we propose GEMS (Agent-Native Multimodal GEneration with Memory and Skills), a framework that pushes beyond the inherent limitations of foundation models on both general and downstream tasks. GEMS is built upon three core components. Agent Loop introduces a structured multi-agent framework that iteratively improves generation quality through closed-loop optimization. Agent Memory provides a persistent, trajectory-level memory that hierarchically stores both factual states and compressed experiential summaries, enabling a global view of the optimization process while reducing redundancy. Agent Skill offers an extensible collection of domain-specific expertise with on-demand loading, allowing the system to handle diverse downstream applications effectively. Across five mainstream tasks and four downstream tasks, evaluated on multiple generative backends, GEMS consistently achieves significant performance gains. Most notably, it enables the lightweight 6B model Z-Image-Turbo to surpass the state-of-the-art Nano Banana 2 on GenEval2, demonstrating the effectiveness of an agent harness in extending model capabilities beyond their original limits.

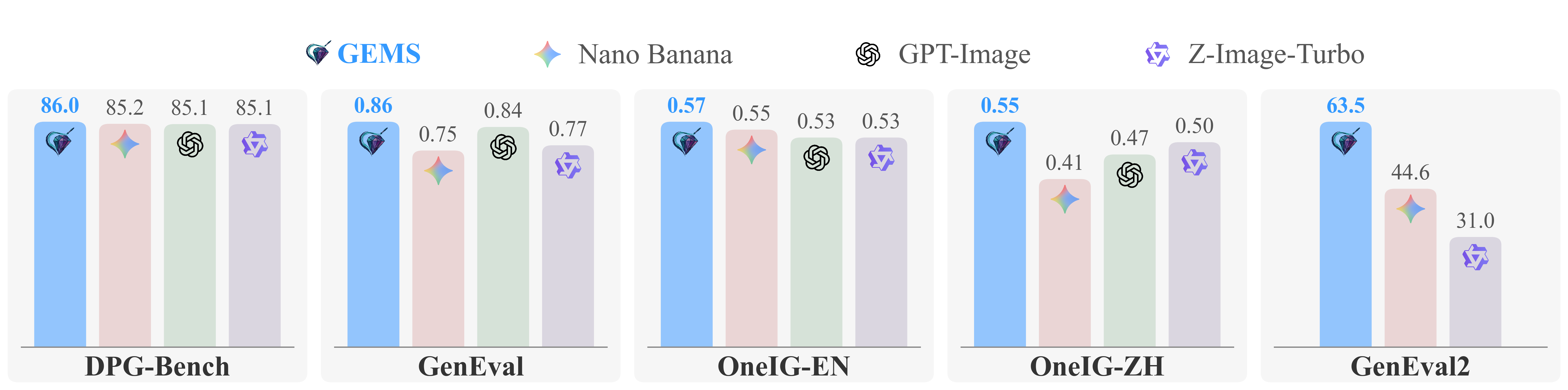

Overall Performance

Overall Performance: GEMS enables the lightweight 6B model Z-Image-Turbo to outperform prominent closed-source models such as Nano Banana and GPT-Image 1 across various tasks.

Three Core Pillars

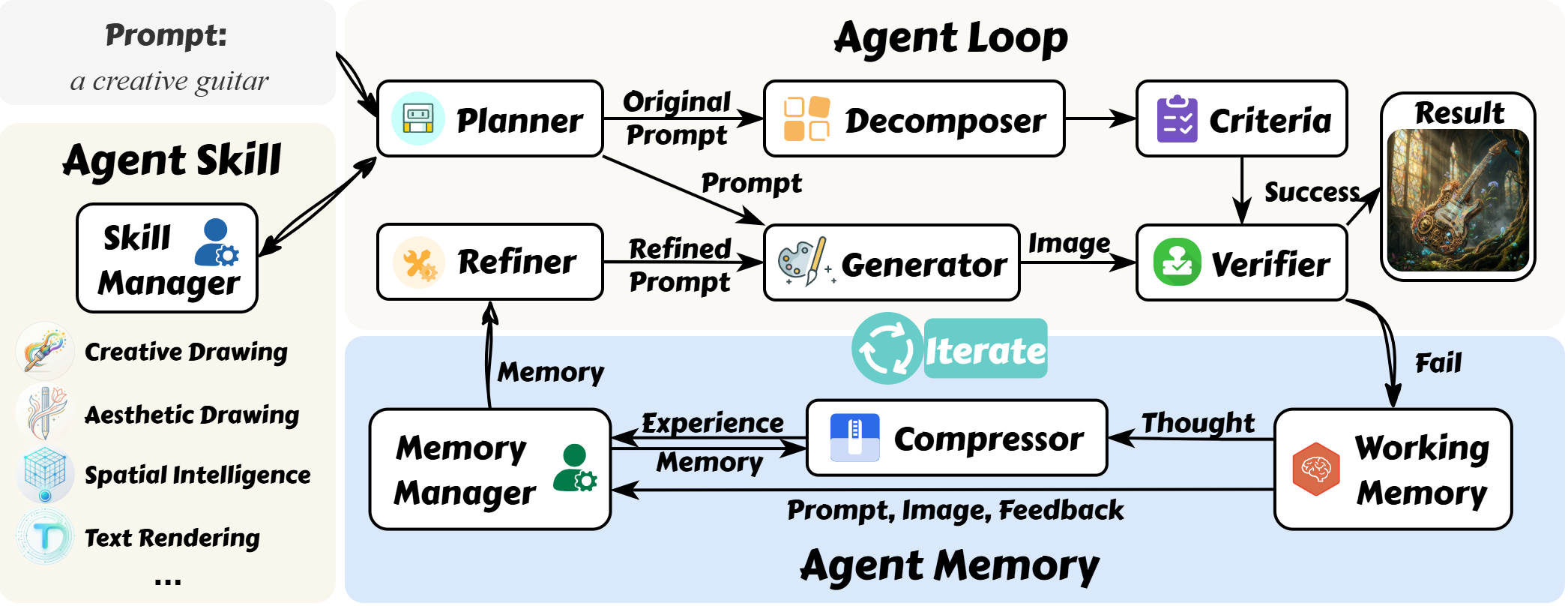

Agent Loop

Iteratively refines results through collaborative roles (Planner, Verifier, Refiner) to ensure high-fidelity performance on complex tasks.
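
The closed loop described above can be sketched roughly as follows. This is a minimal illustration rather than the GEMS implementation: the `agent_loop` function, the `Verdict` type, the `max_rounds` budget, and the four callables standing in for the Planner, the generative backend, the Verifier, and the Refiner are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Verdict:
    """Hypothetical verifier output: a pass/fail flag plus textual feedback."""
    passed: bool
    feedback: str


def agent_loop(
    instruction: str,
    plan: Callable[[str], str],             # Planner: instruction -> initial prompt
    generate: Callable[[str], str],         # backend: prompt -> output (an image handle, say)
    verify: Callable[[str, str], Verdict],  # Verifier: (instruction, output) -> Verdict
    refine: Callable[[str, str], str],      # Refiner: (prompt, feedback) -> revised prompt
    max_rounds: int = 3,                    # assumed iteration budget
) -> Tuple[str, List[Verdict]]:
    """Generate, verify, and refine until the verifier passes or the budget is spent."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    prompt = plan(instruction)
    history: List[Verdict] = []
    output = ""
    for _ in range(max_rounds):
        output = generate(prompt)
        verdict = verify(instruction, output)
        history.append(verdict)
        if verdict.passed:
            break
        # Feed the verifier's feedback into the next prompt.
        prompt = refine(prompt, verdict.feedback)
    return output, history
```

Passing the roles in as plain callables keeps the sketch independent of any particular model or backend; in a real system each role would presumably wrap a model call.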

Agent Memory

A persistent memory that reduces information redundancy by hierarchically compressing optimization trajectories.
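
One way to picture a two-level, trajectory-level memory is sketched below, assuming each round stores a few factual fields alongside a compressed summary of the feedback. The `Round` and `TrajectoryMemory` classes, their fields, and the `summarize` callable are hypothetical and are not taken from the GEMS code; persistence to disk is left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Round:
    prompt: str    # factual state: the prompt sent to the generator
    score: float   # factual state: a verifier score for this round (assumed scalar)
    summary: str   # compressed experience: a short lesson distilled from the feedback


@dataclass
class TrajectoryMemory:
    # Hypothetical compressor; in practice this might be a model call.
    summarize: Callable[[str], str]
    rounds: List[Round] = field(default_factory=list)

    def record(self, prompt: str, score: float, feedback: str) -> None:
        """Store the factual state and only a compressed form of the raw feedback."""
        self.rounds.append(Round(prompt, score, self.summarize(feedback)))

    def best(self) -> Round:
        """Return the highest-scoring round seen so far."""
        return max(self.rounds, key=lambda r: r.score)

    def context(self) -> str:
        """Render a compact, global view of the whole trajectory."""
        return "\n".join(
            f"round {i}: score={r.score:.2f}; {r.summary}"
            for i, r in enumerate(self.rounds, 1)
        )
```

Keeping the raw feedback out of `context()` is what limits redundancy in this sketch: later rounds see one short line per earlier round instead of the full transcript.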

Agent Skill

An extensible repository of domain-specific expertise utilized via on-demand loading to handle diverse downstream applications.
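
On-demand loading can be illustrated with a small lazy registry, assuming each skill is exposed as a short description plus a loader that is invoked only when the skill is first needed. The `SkillRegistry` class and its method names are hypothetical and only sketch the idea; they are not the GEMS API.

```python
from typing import Callable, Dict, List


class SkillRegistry:
    """Hypothetical registry: descriptions are always visible, bodies load lazily."""

    def __init__(self) -> None:
        self._descriptions: Dict[str, str] = {}
        self._loaders: Dict[str, Callable[[], str]] = {}
        self._loaded: Dict[str, str] = {}

    def register(self, name: str, description: str, loader: Callable[[], str]) -> None:
        """Add a skill without loading its (possibly large) body."""
        self._descriptions[name] = description
        self._loaders[name] = loader

    def catalog(self) -> List[str]:
        """Cheap listing an agent could scan to decide which skill it needs."""
        return [f"{name}: {desc}" for name, desc in sorted(self._descriptions.items())]

    def load(self, name: str) -> str:
        """Load a skill body on first use and cache it for later calls."""
        if name not in self._loaders:
            raise KeyError(f"unknown skill: {name}")
        if name not in self._loaded:
            self._loaded[name] = self._loaders[name]()
        return self._loaded[name]
```

New skills are added by calling `register` with another loader, so the collection stays extensible without paying the cost of loading every skill up front.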

Methodology

Citation

@article{he2026gems,
  title={GEMS: Agent-Native Multimodal Generation with Memory and Skills},
  author={He, Zefeng and Huang, Siyuan and Qu, Xiaoye and Li, Yafu and Zhu, Tong and Cheng, Yu and Yang, Yang},
  journal={arXiv preprint arXiv:2603.28088},
  year={2026}
}
